Knowledge Translation:

Introduction to Models, Strategies, and Measures

Published By

The National Center for the Dissemination of Disability Research

Table of Contents

- Definitions of Knowledge Translation

- Characteristics of Knowledge Translation

- Knowledge Translation and Evidence-Based Practice

- CIHR Model of Knowledge Translation

- Other Models and Frameworks Applicable to Knowledge Translation

Effectiveness of Knowledge Translation Strategies

- Overall Effectiveness of Implementation Strategies

- Effectiveness of Specific Implementation Strategies

- Evidence in Rehabilitation

Publication Date: August 2007

Copyright © 2007 The Board of Regents of The University of Wisconsin System.

Knowledge Translation: Introduction to Models, Strategies, and Measures was prepared by Pimjai Sudsawad, Sc.D., University of Wisconsin–Madison. This document has been printed and distributed by the National Center for the Dissemination of Disability Research (NCDDR) at the Southwest Educational Development Laboratory under grant H133A060028 from the National Institute on Disability, Independent Living, and Rehabilitation Research (NIDILRR) in the U.S. Department of Education's Office of Special Education and Rehabilitative Services (OSERS).

SEDL's NCDDR project is a knowledge translation project focused on expanding awareness, use, and contributions to evidence bases of disability and rehabilitation research. This NCDDR publication is designed to provide knowledge translation overview information targeted to stakeholders in disability and rehabilitation research. SEDL is an equal employment opportunity/affirmative action employer and is committed to affording equal employment opportunities for all individuals in all employment matters. Neither SEDL nor the NCDDR discriminates on the basis of age, sex, race, color, creed, religion, national origin, sexual orientation, marital or veteran status, or the presence of a disability.

The contents of this document do not necessarily represent the policy of the U.S. Department of Education, and you should not assume endorsement by the federal government.

Suggested citation:

Sudsawad, P. (2007). Knowledge translation: Introduction to models, strategies, and measures. Austin, TX: Southwest Educational Development Laboratory, National Center for the Dissemination of Disability Research.

Introduction

Knowledge translation (KT) is a complex and multidimensional concept that demands a comprehensive understanding of its mechanisms, methods, and measurements, as well as of its influencing factors at the individual and contextual levels and the interaction between those two levels. This literature review is not intended to be an in-depth or systematic review of any one aspect of knowledge translation. Instead, it brings together several aspects from selected literature to raise awareness, connect thoughts and perspectives, and stimulate ideas and questions for future research on knowledge translation in rehabilitation. The body of work included in this review was selected from frequently cited and thought-provoking literature and represents a variety of thoughts and approaches that are applicable to knowledge translation.

This paper begins by presenting the definitions of knowledge translation and discussing several models that, together, can be used to delineate components and understand mechanisms necessary for successful knowledge translation. Then the knowledge translation strategies and their effectiveness are explored based on the literature drawn from other health-care fields in addition to rehabilitation. Finally, several methods and approaches to measure the use of research knowledge in various dimensions are presented.

Definitions of Knowledge Translation

Knowledge translation (KT) is a relatively new term coined by the Canadian Institutes of Health Research (CIHR) in 2000. CIHR defined KT as "the exchange, synthesis and ethically-sound application of knowledge—within a complex system of interactions among researchers and users—to accelerate the capture of the benefits of research for Canadians through improved health, more effective services and products, and a strengthened health care system" (CIHR, 2005, para. 2).

Since then, a few other definitions of KT have been developed. Adapted from the CIHR definition, the Knowledge Translation Program, Faculty of Medicine, University of Toronto (2004), stated its definition of knowledge translation as "the effective and timely incorporation of evidence-based information into the practices of health professionals in such a way as to effect optimal health care outcomes and maximize the potential of the health system."

The World Health Organization (WHO) (2005) also adapted the CIHR’s definition and defined KT as "the synthesis, exchange, and application of knowledge by relevant stakeholders to accelerate the benefits of global and local innovation in strengthening health systems and improving people’s health."

At around the same time, the National Institute on Disability, Independent Living, and Rehabilitation Research (NIDILRR) developed a working definition of KT in its long-range plan for 2005–2009. NIDILRR refers to KT as "the multidimensional, active process of ensuring that new knowledge gained through the course of research ultimately improves the lives of people with disabilities, and furthers their participation in society" (NIDILRR, 2005).

Most recently, the National Center for the Dissemination of Disability Research (NCDDR) proposed another working definition of KT as "the collaborative and systematic review, assessment, identification, aggregation, and practical application of high-quality disability and rehabilitation research by key stakeholders (i.e., consumers, researchers, practitioners, and policymakers) for the purpose of improving the lives of individuals with disabilities" (NCDDR, 2005).

Characteristics of Knowledge Translation

A prominent characteristic of KT, as indicated by CIHR (2004), is that it encompasses all steps between the creation of new knowledge and its application to yield beneficial outcomes for society. Essentially, KT is an interactive process underpinned by effective exchanges between researchers who create new knowledge and those who use it. As stated by CIHR, bringing users and creators of knowledge together during all stages of the research cycle is fundamental to successful KT.

Knowledge in KT has an implicit meaning as research-based knowledge. CIHR envisioned that KT strategies can help define research questions and hypotheses, select appropriate research methods, conduct the research itself, interpret and contextualize the research findings, and apply the findings to resolve practical issues and problems. As outlined by CIHR (2004), continuing dialogues, interactions, and partnerships within and between different groups of knowledge creators and users for all stages of the research process are integral parts of KT. Examples of different interactive groups are as follows:

- Researchers within and across research disciplines

- Policymakers, planners, and managers throughout the health-care, public-health, and health public-policy systems

- Health-care providers in formal and informal systems of care

- General public, patient groups, and those who help to shape their views and/or represent their interests, including the media, educators, nongovernmental organizations, and the voluntary sector

- The private sector, including venture capital firms, manufacturers, and distributors

CIHR (2004) stated that the process of KT includes knowledge dissemination, communication, technology transfer, ethical context, knowledge management, knowledge utilization, two-way exchange between researchers and those who apply knowledge, implementation research, technology assessment, synthesis of results with the global context, and development of consensus guidelines. Therefore, KT appears to be a larger construct that encompasses most previously existing concepts related to moving knowledge to use. It is the newest conceptual development that seems to be more comprehensive, more sophisticated, and highly embedded in the actual contexts in which the knowledge applications will eventually occur.

Overall, the characteristics of KT can be summarized in a nonranked order as follows:

- KT includes all steps between the creation of new knowledge and its application.

- KT needs multidirectional communications.

- KT is an interactive process.

- KT requires ongoing collaborations among relevant parties.

- KT includes multiple activities.

- KT is a nonlinear process.

- KT emphasizes the use of research-generated knowledge (that may be used in conjunction with other types of knowledge).

- KT involves diverse knowledge-user groups.

- KT is user- and context-specific.

- KT is impact-oriented.

- KT is an interdisciplinary process.

Knowledge Translation and Evidence-Based Practice

Knowledge translation (KT) is a term increasingly used in health-care fields to represent a process of moving what we learned through research to the actual applications of such knowledge in a variety of practice settings and circumstances. In rehabilitation, the interest in KT (and other concepts about moving research-based knowledge into practice) appears to coincide with the growing engagement in the evidence-based practice (EBP) approach, in which practitioners make practice decisions based on the integration of the research evidence with clinical expertise and the patient’s unique values and circumstances (Straus, Richardson, Glasziou, & Haynes, 2005). Despite a strong endorsement for EBP in rehabilitation and other health-care fields, the use of research for practice continues to be lacking (e.g., Bennett et al., 2003; Meline & Paradiso, 2003; Metcalfe, Lewin, Wisher, Perry, Bannigan, & Moffett, 2001; Turner & Whitfield, 1997). This difficulty has led to increased awareness of the complexity of this process, quests to understand its mechanisms, and attempts to develop strategies that could increase its success.

In past literature, scholars have had different takes on the interchangeable use of KT and other similarly focused terminologies. Some have used KT and other terms interchangeably (e.g., Jacobson, Butterill, & Goering, 2003), whereas others have attempted to tease out the differences between KT and other terms (e.g., Johnson, 2005; Kerner, 2006). That debate lies outside the scope of this paper and will not be discussed here. According to the CIHR’s framework, KT encompasses other related concepts that come before it, and the discussion in this paper will follow that theme, treating related concepts as parts of KT.

Knowledge Translation Models

CIHR Model of Knowledge Translation

CIHR (2005) proposed a global KT model, based on a research cycle, that could be used as a conceptual guide for the overall KT process. CIHR identified six opportunities within the research cycle at which the interactions, communications, and partnerships that will help facilitate KT could occur. Those opportunities are the following:

- KT1: Defining research questions and methodologies

- KT2: Conducting research (as in the case of participatory research)

- KT3: Publishing research findings in plain language and accessible formats

- KT4: Placing research findings in the context of other knowledge and sociocultural norms

- KT5: Making decisions and taking action informed by research findings

- KT6: Influencing subsequent rounds of research based on the impacts of knowledge use

Figure 1 shows a graphical model in which the six opportunities listed here were superimposed on CIHR's depiction of the knowledge cycle.

Figure 1. CIHR research cycle superimposed by the six opportunities to facilitate KT

(Source: Canadian Institutes of Health Research Knowledge Translation [KT] within the Research Cycle Chart. Ottawa: Canadian Institutes of Health Research, 2007. Reproduced with the permission of the Minister of Public Works and Government Services Canada, 2007).

Other Models and Frameworks Applicable to Knowledge Translation

The CIHR's KT model offers a global picture of the overall KT process as integrated within the research knowledge production and application cycle. However, the use of other models and/or frameworks with more working details may be necessary to implement each part of the CIHR conceptual model successfully. Such details could be used to augment an understanding of the specific components, chronological stages, and contextual factors that must be taken into consideration to facilitate successful communications, interactions, partnerships, and desired outcomes during each of the KT opportunities. Selected comprehensive models that could be used to augment the CIHR's KT model are described in this section. An effective KT framework requires not only the environmental organizational view, but also the microperspective of an individual (Davis, 2005). Therefore, models and frameworks that represent an individual user's perspective, as well as those that address contextual factors, are included.

Interaction-Focused Framework

Understanding-User-Context Framework: This framework for knowledge translation (Jacobson et al., 2003) was derived from a review of literature and the authors' experience. It provides practical guidelines that can be used by researchers and others to engage in the knowledge translation process by increasing their familiarity with and understanding of the intended user groups. Specifically, this framework can be used as a guide for establishing interactions required by the KT process illustrated in the CIHR's model.

The framework contains five domains that should be taken into consideration when establishing interactions with users:

- The user group

- The issue

- The research

- The researcher–user relationship

- The dissemination strategies

Each domain includes a series of questions. The purpose of these questions is to provide a way to organize what the researcher already knows about the user group and knowledge translation, identify what is still unknown, and flag what is important to know. The following paragraphs and lists describe the foci and sample questions for each domain.

The user group domain focuses on understanding several aspects of the user group, such as the group's operational context, morphology, decision-making practices, access to information sources, attitudes towards research and researchers, and experiences with knowledge translation.

Sample questions for the user group domain are as follows:

- In what formal or informal structures is the user group embedded?

- What is the political climate surrounding the user group?

- What kinds of decisions does the user group make?

- What criteria does the user group use to make decisions?

The issue domain focuses on the characteristics and context of the issue intended to be resolved through the knowledge translation effort.

Sample questions for the issue domain are as follows:

- How does the user group currently deal with this issue?

- For which other groups is the issue salient?

- How much conflict surrounds the issue?

- Is it necessary to possess a particular expertise to understand this issue?

The research domain focuses on the research characteristics, the user group's orientations toward research, and the relevance, congruence, and compatibility of the research (at hand) to the user group.

Sample questions for the research domain are as follows:

- What research is available?

- What is the quality of the research?

- How relevant is the research to the user group?

- Does the research have implications that are incompatible with existing user-group expectations or priorities?

The researcher–user relationship domain focuses on the description of relationships between the researcher and the user group.

Sample questions for the researcher–user relationship domain are as follows:

- How much trust and rapport exist between the researcher and the user group?

- Do the researcher and the user group have a history of working together?

- Will the researcher be interacting with the designated representative of the user group? Will that representative remain the same throughout the life of the project?

- How frequently will the researcher have contact with the user group?

The dissemination strategies domain focuses on practical strategies for disseminating the research knowledge.

Sample questions for the dissemination strategies domain are as follows:

- What is the most appropriate mode of interaction: written or oral, formal or informal?

- What level of detail will the user group want to see?

- How much information can the user group assimilate per interaction?

- Should the researcher pretest or invite feedback on the selected format from representatives of the user group before finalizing presentation plans?

As suggested by Jacobson et al. (2003), these questions may help raise awareness of the type of information about the user group that may be useful for the knowledge translation process. How much of this information needs to be obtained will depend on each knowledge translation circumstance. This framework offers a comprehensive approach to guide the interaction of knowledge creators and knowledge users. However, the focus of these interactions was on the implementation of existing knowledge (KT4 and KT5 in the CIHR's KT model). Additional frameworks that illuminate the mechanisms, considerations, and influencing factors of the interaction between the knowledge creators and the knowledge users through all steps in the process of knowledge translation are certainly needed.
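The five domains and their sample questions can be pictured as a simple checklist that records what is known, what is still unknown, and what is flagged as important. The following is a minimal sketch, not part of Jacobson et al.'s framework: the class names, the `build_checklist` and `open_questions` helpers, and the recorded answer are hypothetical and serve only as illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Question:
    text: str
    answer: Optional[str] = None  # None means the answer is still unknown
    important: bool = False       # flag for "important to know"


@dataclass
class Domain:
    name: str
    questions: List[Question] = field(default_factory=list)

    def unknown(self) -> List[Question]:
        """Return the questions that have no recorded answer yet."""
        return [q for q in self.questions if q.answer is None]


def build_checklist() -> Dict[str, Domain]:
    """Build the five domains with a few sample questions taken from the text."""
    samples: Dict[str, List[str]] = {
        "user group": [
            "What kinds of decisions does the user group make?",
            "What criteria does the user group use to make decisions?",
        ],
        "issue": [
            "How does the user group currently deal with this issue?",
            "How much conflict surrounds the issue?",
        ],
        "research": [
            "What research is available?",
            "How relevant is the research to the user group?",
        ],
        "researcher-user relationship": [
            "Do the researcher and the user group have a history of working together?",
        ],
        "dissemination strategies": [
            "What level of detail will the user group want to see?",
        ],
    }
    return {
        name: Domain(name, [Question(text) for text in texts])
        for name, texts in samples.items()
    }


def open_questions(checklist: Dict[str, Domain]) -> Dict[str, List[str]]:
    """Map each domain name to the text of its still-unanswered questions."""
    return {name: [q.text for q in d.unknown()] for name, d in checklist.items()}


checklist = build_checklist()
# Hypothetical answer, recorded only to show how the checklist is updated.
checklist["issue"].questions[0].answer = "Handled case by case"
# Flag a question the researcher considers important to resolve.
checklist["research"].questions[1].important = True
```

Calling `open_questions(checklist)` then lists, per domain, the questions that still need answers, which mirrors the framework's stated purpose of separating what the researcher already knows from what remains to be learned.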

Context-Focused Models and Frameworks

These selected models and frameworks can be used to understand the contextual factors that could play important roles in the success or failure of the knowledge translation effort and should be taken into consideration in all stages of the KT process.

The Ottawa Model of Research Use: The Ottawa Model of Research Use (OMRU) is an interactive model developed by Logan and Graham (1998). The feasibility and effectiveness of using the OMRU in actual practice contexts were supported by findings from a number of studies (Hogan & Logan, 2004; Logan, Harrison, Graham, Dunn, & Bissonnette, 1999; Stacey, Pomey, O'Connor, & Graham, 2006). The OMRU views research use as a dynamic process of interconnected decisions and actions by different individuals relating to each of the model elements (Logan & Graham, 1998). This model addresses the implementation of existing research knowledge (KT4 and KT5 in the CIHR's KT model).

The model has gone through some revisions since its inception. The most recent version of the OMRU (Graham & Logan, 2004) includes six key elements:

- Evidence-based innovation

- Potential adopters

- The practice environment

- Implementation of interventions

- Adoption of the innovation

- Outcomes resulting from implementation of the innovation

The relationships among the six elements are illustrated in Figure 2.

Figure 2. The Ottawa Model of Research Use

(Reprinted with permission from Canadian Journal of Nursing Research, Vol. 36, Graham, I. D. & Logan, J., Innovations in knowledge transfer and continuity of care, pp. 89–103, copyright © 2004)

According to Graham and Logan (2004), the OMRU relies on the process of assessing, monitoring, and evaluating each element before, during, and after the decision to implement an innovation. Barrier assessments must be conducted on the innovation, the potential adopters, and the practice environment to identify factors that could hinder or support the uptake of the innovation. The implementation plan is then selected and tailored to overcome the barriers and enhance the supports identified. Introduction of the implementation plan is monitored to ensure that the potential adopters learn about the innovation and what is expected of them. The monitoring is ongoing to help determine whether any change in the current implementation or a new implementation plan is required. Finally, the implementation outcomes are evaluated to determine whether the innovation is producing the intended effect or any unintended consequences.

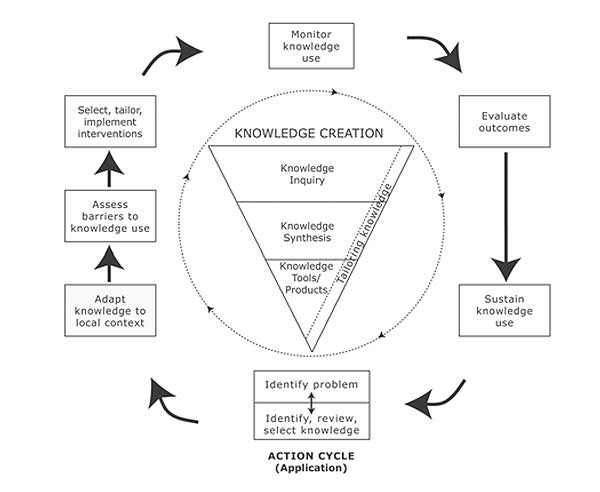

The Knowledge-to-Action Process Framework: Graham et al. (2006) proposed the knowledge-to-action (KTA) process conceptual framework that could be useful for facilitating the use of research knowledge by several stakeholders, such as practitioners, policymakers, patients, and the public. The KTA process has two components: (1) knowledge creation and (2) action. Each component contains several phases. The authors conceptualized the KTA process to be complex and dynamic, with no definite boundaries between the two components and among their phases. The phases of the action component may occur sequentially or simultaneously, and the knowledge-creation-component phases may also influence the action phases.

In the KTA process, knowledge is mainly conceptualized as empirically derived (research-based) knowledge; however, it encompasses other forms of knowing, such as experiential knowledge. The KTA framework also emphasizes the collaboration between the knowledge producers and knowledge users throughout the KTA process. The visual presentation of the KTA process is shown in Figure 3.

Figure 3. The Knowledge-to-Action Process

(Reprinted from the Journal of Continuing Education in the Health Professions, Vol. 26, No. 1, Graham, I. D. et al., Lost in knowledge translation: Time for a map, pp. 13–24, copyright © 2006, with permission from John Wiley & Sons, Inc.)

Knowledge creation consists of three phases: (1) knowledge inquiry, (2) knowledge synthesis, and (3) knowledge tools/products. Knowledge creation was conceptualized as an inverted funnel, with a vast number of knowledge pieces present in the knowledge inquiry process in the beginning. Those pieces are then reduced in number through knowledge syntheses and, finally, to an even smaller number of tools or products to facilitate implementation of the knowledge. The authors stated that as knowledge moves through the funnel, it becomes more distilled and refined, and presumably becomes more useful to the stakeholders. The needs of potential knowledge users can be incorporated into each phase of knowledge creation, such as tailoring the research questions to address the problems identified by the users, customizing the message for different intended users, and customizing the method of dissemination to better reach them.

The action cycle represents the activities needed for knowledge application. Graham et al. (2006) conceptualized the action cycle as a dynamic process in which all phases in the cycle can influence one another and can also be influenced by the knowledge creation process. The action cycle often starts with an individual or group identifying the problem or issue, as well as the knowledge relevant to solving it. Included in this phase is the appraisal of the knowledge itself in terms of its validity and usefulness for the problem or issue at hand. The knowledge then is adapted to fit the local context. The next step is to assess the barriers and facilitators related to the knowledge to be adopted, the potential adopters, and the context or setting in which the knowledge is to be used. This information is then used to develop and execute the plan and strategies to facilitate and promote awareness and implementation of the knowledge. Once the plan is developed and executed, the next stage is to monitor knowledge use or application according to types of knowledge use identified (conceptual use, involving changes in levels of knowledge, understanding, or attitudes; instrumental use, involving changes in behavior or practice; or strategic use, involving the manipulation of knowledge to attain specific power or profit goals). This step is necessary to determine the effectiveness of the strategies and plan so they can be adjusted or modified accordingly. During the KTA process, it is also necessary to evaluate the impact of using the knowledge to determine if such use has made a difference on desired outcomes for patients, practitioners, or the system. A plan also needs to be in place to sustain the use of the knowledge in changing environments as time passes.

As stated by Graham and colleagues (2006), the relationships between the action phases within the cycle are not unidirectional. Rather, each action phase can be influenced by the phase that precedes it and vice versa. For example, knowledge not being adopted and used as intended could indicate the need to review the plans and strategies again to improve the uptake of knowledge. The phases in the action process of the KTA are those also presented in the OMRU, as delineated in the previous section.

The KTA process framework presents a more comprehensive picture of KT than the OMRU because it incorporates the knowledge creation process. The framework also incorporates the need to adapt the knowledge to fit with the local context (which has also been indicated in the CIHR's KT model) and the need to sustain knowledge use by anticipating changes and adapting accordingly. However, the KTA process framework does not provide additional details in the knowledge action process aside from what has already been outlined by the OMRU. Nevertheless, it is a comprehensive framework that begins to incorporate the full cycle of knowledge translation from knowledge creation through implementation and impact.

The Promoting Action on Research Implementation in Health Services Framework: The Promoting Action on Research Implementation in Health Services (PARIHS) (Kitson, Harvey, & McCormack, 1998; Rycroft-Malone, 2004; Rycroft-Malone et al., 2002) is a conceptual model describing the implementation of research in practice. According to the model, a successful implementation of research into practice is a function of the interplay of three core elements: (1) the level and nature of the evidence to be used, (2) the context or environment in which the research is to be placed, and (3) the method by which the research implementation process is to be facilitated. These three elements have equal importance in determining the success of the research use. Each of the elements is positioned on a low-to-high continuum, and the model predicts that the most successful implementation occurs when all elements are on the high end of the continuum.
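The interplay of the three elements is commonly summarized in functional notation, following the original presentation by Kitson et al. (1998). The expression below is shorthand for the relationship described above, not a quantitative formula; no specific functional form is implied.

```latex
SI = f(E, C, F)
% SI: successful implementation of research into practice
% E:  evidence (its level and nature)
% C:  context (the environment in which the research is to be placed)
% F:  facilitation (the method by which implementation is facilitated)
```

Read this way, the model's prediction is that SI is most likely when E, C, and F all sit at the high end of their respective continua.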

Evidence is defined as a combination of research, clinical experience, patient experience, and local data or information. For each type of evidence, there is a range of conditions from "low evidence" to "high evidence." An example of high evidence would be that the research is valued as evidence, well conceived, and well conducted; the clinical experience is seen as a part of the decision; the patient experience is relevant; and the local data or information is evaluated and reflected on.

Context refers to the environment or setting in which people receive health-care services or the environment or setting in which the proposed change is to be implemented. Context can include physical environment in which the practice occurs; characteristics that are conducive to research utilization, such as operational boundaries, decision-making processes, patterns of power and authority, and resources; organizational culture; and evaluation for the purpose of monitoring and feedback. The PARIHS specifies three key themes under context as (1) culture, (2) leadership, and (3) evaluation. The low-to-high continuum was specified for each theme. A low context would be predictive of unsuccessful research utilization, and a high context would be predictive of successful utilization. An example of a high context would be a culture that values individual staff and clients, the leadership within effective teamwork, and the use of multiple methods of evaluation.

Facilitation is defined as a technique by which one person makes things easier for others. The framework authors believe that facilitators have a key role in helping individuals and teams understand what and how they need to change to apply evidence to practice. The role of a facilitator is an appointed one (rather than one arising from personal influence) that could be internal or external to the organization. The function of this role is about helping and enabling, as opposed to telling or persuading. It can encompass a broad spectrum of purposes, ranging from helping achieve a specific task to helping individuals and groups better understand themselves in terms of attributes that are important to achieve those tasks, such as attitudes, habits, skills, ways of thinking, and ways of working. According to the framework, there are three themes of facilitation: (1) purpose, (2) roles, and (3) skills and attributes. As with the previous elements, each theme has dimensions of low and high. In PARIHS, high facilitation relates to the presence of appropriate facilitation depending on the needs of the situation.

The PARIHS framework is unique in that it identifies facilitation as one of the main elements in the research utilization process and provides much detail in determining the potential of success based on the prediction structure of the model. However, similar to most models reviewed so far, the emphasis is on the implementation component within the knowledge translation process. PARIHS does not discuss elements or factors related to the knowledge creation process, although creation is also an important part of knowledge translation.

Although the PARIHS model is highly complex and likely to be comprehensive in explaining factors and elements related to the use of research in practice, more demonstration of how the model could be applied in an actual practice environment is needed. The most updated version (Rycroft-Malone, 2004) is substantially more complex than the original (Kitson et al., 1998). All elements were revised based on concept analysis of each element (evidence, context, facilitation) through extracting various dimensions of the elements from extensive literature to form a comprehensive definition and scope. Although doing so has undoubtedly increased the level of complexity of the model, it is not certain how that would affect the actual use of the model as a general framework to guide research utilization in everyday practice settings.

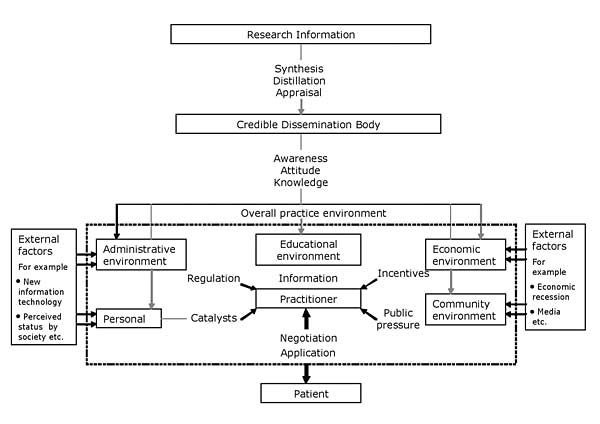

The Coordinated Implementation Model: Lomas (1993) proposed a model of research implementation that outlines the overall practice environment to capture schematically the competing factors that influence the implementation process. According to the author, this model demonstrates some of the additional and largely unexploited routes through which research information could influence clinical practice. These factors are illustrated in the model diagram in Figure 4.

Figure 4. The Coordinated Implementation Model

(Reprinted from The Milbank Quarterly, Vol. 71, Lomas, J., Retailing research: Increasing the role of evidence in clinical services for childbirth, pp. 439–475, copyright © 1993, with permission from Blackwell Publishing)

As discussed by Lomas (1993), the approaches used to transfer research knowledge into practice must take into account the views, activities, and available implementation instruments of at least four potential groups: community interest groups, administrators, public policymakers, and clinical policymakers. Although the influences on the use of research from these groups (public pressure, regulation, economic incentives, education, and social influence) are exerted through different venues, they form a system in which, when they work together, their combined effect is greater than the sum of their individual effects. This model holds that patients, either as individuals or groups, can also strongly influence practitioners' decisions.

This model helps increase awareness of factors that should be taken into consideration in the implementation effort within the knowledge translation process. Similar models that outline the contextual factors that could influence other steps of the knowledge translation process would also be extremely helpful and would add to the understanding of the process.

Individual-Focused Models

The Stetler Model of Research Utilization: The Stetler Model of Research Utilization is a practitioner-oriented model, expected to be used by individual practitioners as a procedural and conceptual guide for the application of research in practice. The model was first developed as the Stetler/Marram Model of Research Utilization (Stetler & Marram, 1976) and later revised and renamed the Stetler Model of Research Utilization (Stetler, 1994, 2001). The latest version of this model consists of two parts. The first part is the graphic model containing five phases of research utilization. The second part contains clarifying information and options for each phase. The graphic model is illustrated in Figure 5.

Figure 5. The Stetler Model of Research Utilization

(Reprinted from Nursing Outlook, Vol. 49, Stetler, C. B., Updating the Stetler model of research utilization to facilitate evidence-based practice, pg. 272–279, copyright © 2001, with permission from Elsevier)

The Stetler model was developed as a prescriptive approach designed to facilitate safe and effective use of research findings (Stetler, 2001). The model is based on six basic assumptions:

- The formal organization may or may not be involved in an individual's utilization of research.

- Utilization may be instrumental, conceptual, and/or symbolic.

- Other types of evidence and/or non-research-related information are likely to be combined with research findings to facilitate decision making or problem solving.

- Internal and external factors can influence an individual's or group's view and use of evidence.

- Research and evaluation provide us with probabilistic information, not absolutes.

- Lack of knowledge and skills pertaining to research utilization and evidence-based practice can inhibit appropriate and effective use.

The updated model directs users to be conscious of the types of research evidence and suggests that users seek already published systematic reviews whenever possible instead of using primary studies. The following paragraphs provide descriptions of activities within each of the five phases in the graphic model shown in Figure 5 (Stetler, 1994, 2001).

Phase I focuses on the purpose, context, and sources of research evidence. The practitioner identifies potential issues or problems and verifies their priority; decides whether to involve others; considers other influential internal and external factors, such as beliefs, resources, or time lines; seeks evidence in the form of systematic reviews; and selects research sources with conceptual fit.

Phase II focuses on the validation of findings and includes activities such as critiquing a systematic review, rating the quality of each evidence source, and determining the clinical significance of the evidence. The evaluation is utilization-focused, and the decision is made whether to accept or reject the evidence (rather than using the traditional critique to determine simply whether the evidence is weak or strong). If there is no evidence or the evidence is insufficient, the evidence is rejected and the process is terminated. Otherwise, the evidence is accepted and the practitioner progresses to Phase III.

Phase III focuses on four criteria (substantiating evidence, fit of setting, feasibility, and current practice) that are used together as a gestalt to determine whether it is desirable to use the validated evidence in the practice setting. Substantiating evidence is meant to recognize the potential value of both research and additional non-research-based information as a supplement. Fit of setting refers to how similar the characteristics of the sample and study environment are to the population and setting of the practitioner. Feasibility entails the evaluation of risk factors, need for resources, and readiness of others involved ("r, r, r," as stated in the graphic model shown in Figure 5). Current practice entails a comparison between the current practice and the new practice (that may be introduced) to determine whether it will be worthwhile to change the practice. A decision is then made whether to "use," "consider use," or "not use" the evidence considered.

Phase IV focuses on the evidence implementation process, beginning with the confirmation of type of use (conceptual, instrumental, symbolic), method of use (informal/formal, direct/indirect), and level of use (individual, group, organization). Next, the operational details are specified as to who should do what, when, and how. In this phase, a decision is made regarding whether to use or to consider the use of the evidence.

Phase V focuses on the evaluation of the use, with two separate processes to evaluate the case of "use" and the case of "consider use" as decided in Phase IV. The evaluation of the "consider use" option requires pilot testing to enable further evaluation of the feasibility of the change in practice. Pilot data are then used to decide whether the evidence will be formally used. In the case of a "use" decision, the implementation process will be formally evaluated.

This model was originally developed for use with nurses. However, the same principles could conceivably apply readily to other practitioners, including those in rehabilitation. The Stetler model is highly comprehensive and provides procedures to help guide practitioners through all steps in the research use process while taking into consideration the practical (utilization-focused) aspects of clinical decisions.

Effectiveness of Knowledge Translation Strategies

Many strategies related to knowledge translation were reported in the literature. The majority of these strategies focused on the dissemination and implementation of existing knowledge. Only a few studies reported strategies involving knowledge creation. Those studies, however, mainly described the strategies that were used and their successes, but not the measurable outcomes of such strategies.

The evidence on the effectiveness of knowledge dissemination and implementation strategies came largely from studies on physicians, with a much smaller portion coming from other health professionals, such as nurses, physician assistants, and other staff. In addition, the knowledge targeted for implementation in these studies was not all research-based. However, much can be learned about what might influence changes in providers' practice behavior. Ultimately, the translation of research knowledge into practice implies a need for change in providers' behavior to enable adoption and use of the new research-based knowledge.

Overall Effectiveness of Implementation Strategies

A considerable number of studies have examined the effectiveness of implementation strategies, and several systematic reviews of those studies have been conducted. Overviews of systematic reviews are also available.

Bero et al. (1998) conducted an overview of 18 systematic reviews, published between 1989 and 1995, to investigate the effectiveness of dissemination and implementation strategies of research findings. The systematic reviews were obtained from searches in MEDLINE records from 1966 to June 1995 (the Cochrane Library was also searched, but no relevant reviews were located). To be included, the reviews had to focus on interventions to improve professional performance, report explicit selection criteria, and measure changes in performance or outcome. The researchers found the following consistent themes from their review:

- Most of the reviews reported modest improvements in performance after interventions.

- Passive dissemination of information was generally ineffective in altering practices, no matter how important the issue or how valid the assessment methods.

- Multifaceted interventions, a combination of methods including two or more interventions, seemed to be more effective than single interventions.

Bero et al. (1998) also categorized specific interventions into three tiers based on their level of effectiveness in promoting behavioral change among health professionals: (1) consistently effective interventions, (2) interventions of variable effectiveness, and (3) interventions that have little or no effect. These levels of effectiveness are elaborated below:

- Consistently effective interventions included educational outreach visits for prescribing in North America; manual or computerized reminders; multifaceted interventions, such as a combination of two or more of audit and feedback, reminders, local consensus processes, or marketing; and interactive educational meetings.

- Interventions of variable effectiveness included audit and feedback, the use of local opinion leaders, local consensus processes, and patient-mediated interventions.

- Interventions that have little or no effect included educational materials and didactic educational meetings.

The authors concluded that the use of specific strategies to implement research-based recommendations seems necessary to promote practice changes and that more intensive efforts are generally more successful. The authors also indicated a need to conduct studies to evaluate two or more interventions in a specific setting to clarify the circumstances likely to modify the effectiveness of such interventions.

In an updated version of the Bero et al. overview (1998), Grimshaw et al. (2001) conducted an overview of 41 systematic reviews published between 1989 and 1998 to examine the effectiveness of intervention strategies in changing providers' behavior to improve quality of care. Similar inclusion criteria were used, although the searches for systematic reviews were expanded to include the Health Star database and feedback from the Cochrane Effective Practice and Organization of Care group's Listserv of potentially relevant published reviews that might have been omitted. The systematic reviews included in this overview were found to be widely dispersed across 27 different medical journals and covered a wide range of interventions.

Similar to the findings from the original overview (Bero et al., 1998), Grimshaw et al. (2001) found that, in general, passive approaches are ineffective and unlikely to result in behavior change (although they could be useful in raising awareness). Also, as previously reported, multifaceted interventions that targeted several barriers to change are more likely to be effective than single interventions. Specifically, the interventions of variable effectiveness included audit and feedback and use of local opinion leaders, whereas the interventions that were generally effective included educational outreach and reminders. However, most of the interventions were effective under some circumstances, and none were effective under all circumstances.

The researchers noted considerable overlap across the reviews in terms of original studies included, and they suggested that one reason for such overlap could be the lack of agreement within the research community on a theoretical or empirical framework for classifying interventions aimed at facilitating professional behavior change. In addition, although the findings indicated that multifaceted interventions are more likely to be successful, it is difficult to disentangle which components of such interventions led to success. Therefore, Grimshaw et al. (2001) suggested that more research is needed to better understand which components contribute to the effectiveness of multifaceted interventions.

Grol (2001) reported on experiences with more than 10 years of development and dissemination of clinical guidelines for family medicine in the Netherlands, arguably one of the most comprehensive programs for evidence-based guidelines development and implementation in the world. A stepwise approach was used for guidelines development and dissemination. First, a relevant topic was selected by an independent advisory board of practitioners. Second, researchers and practitioners worked together to develop guidelines, which were tested for feasibility and acceptability through a written survey and by external reviewers who specialized in the topic, and the guidelines were then revised. Next, the guidelines were presented to an independent scientific board for official approval. After approval, the final version of the guidelines was published in a scientific journal for family physicians. In addition to the formal publication, the dissemination activities included developing special educational programs and packages for each set of the guidelines and sending them to regional and local coordinators for continuing medical education and quality improvement. Support materials, such as summaries of the guidelines for receptionists, leaflets, letters for patients, and consensus agreements with specialists' societies, were also developed and disseminated.

Several aspects of these experiences were empirically investigated, including knowledge and acceptance of the guidelines, the use of the guidelines, barriers to their implementation, and intervention effects. Grol (2001) drew three conclusions. First, the development of clinical guidelines is feasible and accepted by the target group when such development is owned and operated by the profession itself. Second, a comprehensive strategy to disseminate the guidelines through various channels appears to be very important. Third, a program to implement a guideline should be well designed, well prepared, and preferably pilot-tested for use.

Grimshaw et al. (2004) conducted a comprehensive systematic review of 235 original studies to evaluate the effectiveness and efficiency of guideline dissemination and implementation strategies. Literature searches were conducted in MEDLINE (1966–1998), Health Star (1975–1988), Cochrane Controlled Trial Register (4th ed., 1998), EMBASE (1980–1998), SIGLE (1980–1988), and the specialized register of the Cochrane Effective Practice and Organization of Care group. The studies included in this review employed randomized controlled trials, controlled clinical trials, controlled before-and-after studies, and interrupted time series methodologies. They also included medically qualified health-care professionals, used guideline dissemination and implementation strategies, and used objective measures of provider behavior and/or patient outcome.

The researchers found that the majority of interventions produced modest to moderate improvements in care, with a considerable variation both within and across interventions. Commonly evaluated single interventions were reminders, dissemination of educational materials, and audit and feedback. For multifaceted interventions, no relationship was found between the number of components in the interventions and the effects of such interventions. Fewer than one third of the 309 comparisons within the included studies reported economic data.

Grimshaw et al. (2004) concluded that there is an imperfect evidence base to support decisions about which guideline dissemination and implementation strategies are likely to be efficient under different circumstances. In addition, there is also a need to develop and validate a coherent theoretical framework of health-professional behaviors, organizational behaviors, and behavior change to better inform appropriate choices for dissemination and implementation strategies and to better estimate the efficiency of such strategies.

Effectiveness of Specific Implementation Strategies

Audit and Feedback

To further investigate the features or contextual factors that determine the effectiveness of audit and feedback, Jamtvedt, Young, Kristoffersen, O'Brien, and Oxman (2006) conducted a systematic review to investigate the effects of audit and feedback on both professional practice and health outcomes using 118 randomized controlled trials (RCTs) published between 1977 and 2004. The original studies were located through the Cochrane Effective Practice and Organization of Care register for 2004, MEDLINE from January 1966 to April 2000, and the Research and Development Resource Base in Continuing Medical Education. The RCTs in this review included participants who were health-care professionals responsible for patient care; used audit and feedback as one of the interventions; and objectively measured provider performance in a health-care setting or measured health outcomes. Audit and feedback was defined as any summary of clinical performance of health care over a specified period of time, with information given in a written, electronic, or verbal format.

The findings showed that the effects of audit and feedback on compliance with the desired practice varied from a negative effect to a very large positive effect, and the differences in effects were not found to relate to study quality. Some possible explanations offered by the authors for the differences included the level of baseline compliance of the targeted behavior, the intensity of audit and feedback, and the level of motivation of health professionals to change the targeted behavior (not assessed due to lack of sufficient data from original studies). The authors concluded that audit and feedback could be effective in improving professional practice, although the degree of effectiveness is likely to be greater in cases where the baseline adherence to recommended practice is low and the delivery intensity of audit and feedback is high. However, the results do not support mandatory use of audit and feedback as an intervention to change practice.

Recently, Hysong, Best, and Pugh (2006) used a purposeful sample of six Veterans Affairs Medical Centers to further investigate the impact of feedback's characteristics on its effectiveness, comparing facilities with high adherence to six clinical practice guidelines to those with low adherence. Data were obtained through individual and group interviews of all levels of staff at each facility, including facility leadership, middle management and support management, and outpatient clinic personnel. The researchers found four discernible characteristics that distinguished the high- and low-performing facilities: (1) the timeliness with which the providers received feedback, (2) the degree to which providers received feedback individually, (3) the punitive or nonpunitive nature of the feedback, and (4) the customization of feedback in ways that made it meaningful to individual providers. Based on these findings, the authors proposed a model of actionable feedback in which feedback should be provided in a timely fashion; feedback should be about the clinician's individual performance rather than aggregated performance at a clinic or facility level; feedback should be nonpunitive; and the individual should be engaged as an active participant in the process rather than be merely a passive recipient of information.

Tailored Interventions

Shaw et al. (2005) conducted a systematic review of 15 randomized controlled trials, published between 1983 and 2002, to assess the effectiveness of planning and delivery interventions tailored to address specific and prospectively identified barriers to changing professional practice and health outcomes. The pool of studies was retrieved from the Cochrane Collaboration Effective Practice and Organization of Care group's specialized register and pending files until the end of December 2002. Studies in this review were RCTs that included participants who were health-care professionals responsible for patient care; included at least one group that received an intervention tailored to address explicitly and prospectively identified barriers to change; either involved a comparison group that did not receive a tailored intervention or compared a tailored intervention addressing individual barriers with an intervention addressing both individual and social- or organizational-level barriers; and included objectively measured professional performance (excluding self-report). A tailored intervention was defined as an intervention chosen to overcome barriers identified before the design and delivery of the intervention. A meta-analysis method was used to synthesize subsets of the studies when possible.

The authors concluded that the effectiveness of tailored interventions is not conclusive and more rigorous trials are needed before the effectiveness of such an approach can be established. In addition, because of the small number of studies limited to a small number of organizational settings, the authors stated that it was not possible to determine whether interventions targeting both individual and organizational barriers are more effective than those targeting only individual barriers. Based on the review, the authors concluded that decisions about whether the tailored intervention approach is likely to be effective for specific problems should be made based on the knowledge of the problem in each setting and other practical considerations.

Organizational Structures

Foxcroft and Cole (2000) conducted a systematic review to determine the extent to which change in organizational structures would promote the implementation of high-quality research evidence in nursing. Literature searches were performed in the following databases: the Cochrane library, MEDLINE, EMBASE, CINAHL, SIGLE, HEALTHLINE, the National Research Register, the Nuffield Database of Health Outcomes, and the NIH Database up to August 2002 (the review was updated in 2003). Hand searches were performed in the periodicals Journal of Advanced Nursing, Applied Nursing Research, and Journal of Nursing Administration (to 1999). Reference lists of the articles obtained were also checked. In addition, experts in the field and relevant Internet groups were contacted for any other potential studies. To be included in this review, each study had to employ randomized controlled trial, controlled clinical trial, or interrupted-time-series research designs; include health-care organizations (where nurses practiced) as participants and measure and report nursing practice outcomes separately; evaluate an entire or identified component of organizational infrastructure aimed at promoting effective nursing interventions; and use objective measures of evidence-based practice.

Foxcroft and Cole (2000) found that no studies were sufficiently rigorous to be included in the formal systematic review, either at that time or when they updated the review in 2003. Therefore, to establish the evidence baseline in this area, they reported on seven studies that did not meet the design quality required but had evaluated organizational infrastructural interventions. All of these studies were retrospective case reports. The researchers found that although positive results were reported, no substantive evidence was offered to support the studies' conclusions. Therefore, no clear implication for practice can yet be drawn based on the findings of those studies.

Interactive Strategies

Vingillis et al. (2003) reported the use of knowledge translation strategies that integrated knowledge generation with knowledge diffusion and utilization. During the project's 3-year period, the researchers developed the initial research proposal in response to frustration expressed by local professionals. The researchers also built into the original research proposal a series of three colloquia of potential knowledge users, and they established an open-door policy so that interested parties could request meetings with the team. Vingillis et al. used a partnership culture model in which researchers and potential knowledge users develop a partnership of trust, respect, ownership, and common ground as the fundamental first step to successful knowledge dissemination and utilization. The strategies used by this group included viewing research as the means and not as the end, linking the university and research services to the community, using a participatory research approach, embracing transdisciplinary research and interactions, and using connectors to assist potential knowledge users in identifying knowledge needs and to assist researchers in translating knowledge to users. However, the effectiveness of these strategies in promoting knowledge translation was not reported.

Evidence in Rehabilitation

The evidence to support or dispute the effectiveness of knowledge translation strategies in rehabilitation is very limited and available in the form of original studies only (as opposed to the systematic reviews or overviews seen in medicine). Thomas, Cullum, McColl, Rousseau, and Steen (1999), in their searches of primary studies to conduct a systematic review to evaluate the effectiveness of practice guidelines in professions allied to medicine, could not locate such studies in rehabilitation. At present, studies of the effectiveness of knowledge translation strategies in rehabilitation continue to be scarce.

Wolfe, Stojcevic, Rudd, Warburton, and Beech (1997) investigated the uptake of stroke guidelines in a district of southern England using an 18-month audit process. The guidelines were developed through an interdisciplinary collaboration and included rehabilitation standards of services for physiotherapy, occupational therapy, and speech therapy. The guidelines were made available to participating hospitals, although no other specific strategies appeared to have been used to facilitate the adoption of the guidelines. The researchers found that, over the period of 18 months, only modest changes in practice occurred according to the guidelines, and the overall level of rehabilitation service remained low despite a significant increase in occupational therapy services within 72 hours of an acute stroke episode. The researchers concluded that despite local development and feedback, adherence to guidelines was relatively poor, there was little improvement over time, and a considerable amount of unmet need continued to exist.

Heinemann et al. (2003) evaluated the effectiveness of a lecture-based educational program about post-stroke rehabilitation guidelines. The knowledge and referral practice questionnaire was used to assess changes in knowledge and practice of physicians and allied health professionals who provided services in acute care settings. The findings indicated no significant increase in knowledge or referrals at 6 months following the educational program. The researchers concluded that simply providing information about the guidelines did not result in an increase in knowledge and referrals.

Fritz, Delitto, and Erhard (2003) conducted a randomized clinical trial comparing the effectiveness of providing therapy based on a classification system matching patients to specific interventions with providing therapy based on the Agency for Healthcare Research and Quality guidelines. At 4 weeks after treatment, the researchers found that the patients who received classification-based therapy showed greater improvement on the physical component score of the general health measure (SF-36), greater satisfaction, and increased likelihood of returning to full-duty work status. At 1 year, however, there were no statistically significant differences between the groups on any outcome.

Pennington et al. (2005) evaluated the effectiveness of two training strategies to promote the use of research evidence in speech and language therapy management of post-stroke dysphagia. The first strategy was the training on critical appraisal of published research papers and practice guidelines, and the second strategy was the same training plus additional training on management of change in clinical practice. The researchers found that, overall, there was no significant change in the adherence to clinical practice guidelines within 6 months of intervention. No other outcomes were measured.

Bekkering et al. (2005), using a cluster-randomized controlled trial, compared an active strategy for implementation of the Dutch guidelines for patients with low back pain with the standard passive method of dissemination of the same guidelines in 113 primary-care physical therapists who treated a total of 500 patients. The passive method group received the guidelines by mail, and the active method group received an additional active training strategy consisting of two sessions of education, group discussion, role-playing, feedback, and reminders. The outcomes were measured through patients' self-report questionnaires on physical functioning, pain, sick leave, coping, and beliefs. The researchers found that physical functioning and pain in both groups improved substantially in the first 12 weeks but there were no significant differences between the two groups. At a follow-up at 12 months, there continued to be no difference between the two strategies. The researchers concluded that there was no additional benefit to applying the active strategy to implement the physical therapy guidelines for patients with low back pain.

Because of the limited evidence, no conclusion or even pattern can be drawn from these studies at this time. The knowledge translation inquiry in rehabilitation appears to be in the beginning stage, and further development in this area is clearly needed. Although some information is available from other health-care fields, such as medicine and nursing, it is not certain how applicable those findings would be for rehabilitation, because of the conceivable difference in practice focus, culture, methods, and contexts.

Measures of Knowledge Use

Background Information

Backer (1993) defined knowledge utilization as "[including] a variety of interventions aimed at increasing the use of knowledge to solve human problems" (p. 217). Backer envisioned that knowledge utilization embraces a number of subtopics such as technology transfer, information dissemination and utilization, research utilization, innovation diffusion, sociology of knowledge, organizational change, policy research, and interpersonal and mass communication.

The use (application) of research knowledge is certainly the goal of knowledge translation. The expected outcome of such application/use would be a positive impact on the health and well-being of the intended beneficiaries (CIHR, 2004; NIDILRR, 2005). The inquiry related to the use (or lack thereof) of research knowledge has been a subject of interest to multidisciplinary communities since the late 1960s (Paisley & Lunin, 1993). This interest has intensified in recent years, in parallel with increased recognition of the difficulties in moving research into practice.

Types of Knowledge Use

There are three main types of use: (1) instrumental use, (2) conceptual use, and (3) symbolic use. As described by Beyer (1997), instrumental use involves applying research results in specific and direct ways; conceptual use involves using research results for general enlightenment; and symbolic use involves using research results to legitimatize and sustain predetermined positions. Instrumental use has been linked to the decision-making process, in which a direct, demonstrable instrumental use of research is meant to solve clearly predefined problems.

Estabrooks (1999) referred to instrumental use as a concrete application of research, in which research is translated into material in usable forms, such as a protocol that is used to make specific decisions. Conceptual use, according to Estabrooks, occurs when the research may change one's thinking but not necessarily one's particular action. In this type of use, the research helps inform and enlighten the decision maker. Symbolic use, in Estabrooks' view, is the use of research as a political tool to legitimatize a position or practice.

The conceptual structure of research utilization was investigated empirically in a study of 600 registered nurses in western Canada (Estabrooks, 1999). Using structural equation modeling for data analysis, Estabrooks demonstrated that instrumental, conceptual, and symbolic research utilization influenced overall research utilization, with more than 70% of the variance explained. The researcher also asserted that research utilization can be measured with relatively simple questions, as demonstrated in this study, which measured each type of research utilization with only one question.

Emerging evidence shows that each type of use should be considered separately, as the types may be associated with different predicting factors. In a survey of 833 government officials, Amara, Ouimet, and Landry (2004) found that, in general, the three types of research use simultaneously played a significant role in government agencies and were commonly associated with the same set of factors. However, a small number of factors explained increases in instrumental, conceptual, and symbolic utilization of research in different ways. Higher conceptual use was significantly associated with qualitative research products and with respondents who had graduate studies. Higher instrumental use was significantly associated with research products that focused on the advancement of scholarly knowledge and with adaptation of research to users' needs. Finally, higher symbolic use was associated with respondents from provincial government agencies (as opposed to federal government agencies) and also with the adaptation of research products to users' needs.

Milner, Estabrooks, and Humphrey (2005), in their survey of nurses in Alberta, Canada, also found that different factors predicted different types of research use. Having a degree in nursing, attitude, awareness, and involvement in research significantly predicted instrumental research utilization. Having a degree in nursing also predicted conceptual research utilization, whereas attitude and awareness did not. Localite communication, on the other hand, significantly predicted conceptual but not instrumental research utilization. For symbolic research utilization, attitude, awareness, and involvement in research were significant predictors. Mass media were also found to significantly predict symbolic research use, though not other types of use. More studies in this area are needed to understand these relationships further.

These preliminary findings seem to indicate that it may be beneficial to take a precise, analytical approach when investigating knowledge use. To facilitate the use of knowledge successfully (the focus of KT), it may be essential to determine in advance the type of use in which the knowledge user is likely to engage (or the specific use on which the knowledge translation effort will focus), consider the specific factors likely to influence such use, derive appropriate knowledge translation strategies accordingly, and measure that specific type of use when evaluating the outcome.

Considerations in Measuring Knowledge Use

Early research assumed that utilization occurred when an entire set of recommendations was implemented in the form suggested by the researcher (Larsen, 1980). However, Larsen argued that knowledge can be modified, partially used, used in an alternative way, or justifiably not used at all. Complete adoption of knowledge is generally the exception and not the rule. As Larsen further stated, in some settings, knowledge may not be used if implemented in its original form but may work very well if changed to meet the user's circumstances. A recent example is an implementation study of Constraint-Induced Therapy (Sterr, Szameitat, Shen, & Freivogel, 2006), in which the treatment protocol (as previously developed in research) was locally tested and adapted to fit the needs, preferences, and logistics of the users in a local setting.

In addition, what constitutes effective use is debatable. Often, the focus is on the use of research as intended. However, effective use can occur in ways not previously considered (SEDL, 2001). The timeline for measuring use, whether retrospective or prospective, can also influence the results (Conner, 1981).

Framework in Evaluating Knowledge Use

Knowledge use is not a single discrete event occurring at one point in time. Rather, it is a process consisting of several events (Rich, 1991). Therefore, evaluating the use of knowledge can be complex and requires a multidimensional and systematic approach. Having a framework to guide the process when designing activities to evaluate the use of knowledge can be helpful.

An example of a comprehensive framework that can be used to guide the evaluation of knowledge use is that developed by Conner (1980). Conner proposed a conceptual model for research utilization evaluation, with the emphasis on four general aspects that are important for the evaluator to consider: (1) goals, (2) inputs, (3) processes, and (4) outcomes.

The model is represented by the visual scheme in Figure 6.

Figure 6. Conner's conceptual model for research-utilization evaluation

(Source: Handbook of Criminal Justice Evaluation [M. W. Klein & K. S. Teilmann, Eds.], Conner, R. F., The evaluation of research utilization, pp. 629–653, copyright © 1980, reprinted with permission from Sage Publications)

According to Conner (1980), the first step in evaluating research utilization is to set goals that one would like to achieve as a result of the research-utilization effort. Without goals, it will be impossible to evaluate the success of such an effort. The goals for utilization should be set at the beginning of the research program. However, goal setting is perceived as a dynamic process in which goals can change during the course of the research as better insight emerges. One of the main reasons for setting goals, according to Conner, is to know who will be the primary and secondary targets of the utilization effort. Once the target groups have been identified, they should be consulted at the outset of the research program to make sure that the information will be realistic for them to use and to learn what type of information would provide convincing evidence for them.

In this model, the primary inputs for a utilization effort are research findings. The research findings need to be evaluated on two aspects: quality and importance. Quality refers to whether the findings have sufficiently high validity and reliability, which can be verified either by consultation with other researchers or by replication of the research in a similar setting. Importance can also be determined in several ways, such as the potential users' ratings of the degree of importance; assessment of socially significant implications; and assessment of the clarity of the recommendation for action (that is, whether users can understand it). According to Conner (1980), other inputs should also be considered in addition to the research findings. The materials to be disseminated should be assessed for their appeal, clarity, and appropriateness for the target users. The people who will direct the utilization effort should be assessed for whether their skills and temperament are suitable for conducting such an effort. When the model was later updated (Kirkhart & Conner, 1983), a consideration for resources (whether there are sufficient resources pertaining to the quality of materials or personnel involved in the planned change process) was added to the input component.

For the process component, Conner (1980) suggested that there is a need to monitor and document the course of dissemination and utilization efforts, particularly because the course could change from what was originally planned. Documenting the deviations from the plan will help in adjusting the evaluation to reflect the program that was actually implemented rather than one that was planned to be implemented. The monitoring includes documenting who received the information to be utilized; their opinions of the information and their judgment of subsequent use of such information (pattern); their reasons for use or nonuse of the information (rationale); and the organizational arrangement and personal state and situation of the potential users (state of utilizers). The attitudes and capability of individuals adopting the innovation were later added to the process component of the model (Kirkhart & Conner, 1983).

The central question of this evaluation process is whether the results of the research are utilized. Conner (1980) indicated that evaluation of outcomes must address the type, level, and timing of the utilization process. This information can be determined in part by analyzing who has used the information (level of utilization), how the information was used (type of utilization), and the various time frames of utilization activities (timing of utilization). Outcomes assessment could focus on one of the two target groups: the actual targets (people who have been the direct target of utilization efforts) and the potential targets (people who are most relevant for utilization of the findings although they may not be the direct targets of the utilization efforts). The focus on potential targets is intended to address the self-selection bias that could conceivably occur should the assessment focus only on the actual targets, who tend to be more involved.

Conner (1980) concluded that evaluating research utilization has five benefits:

- Determines the effectiveness of the utilization efforts using a critical review process

- Increases recognition of the importance of the dissemination-utilization process as a distinct activity rather than just the last step in a research project

- Increases attention to utilization goals and objectives, which will help in the development of utilization efforts

- Provides more understanding of the process, including what may facilitate and/or hinder research utilization, which will aid in developing conceptual models of the utilization process that can be empirically tested

- Increases the amount of interaction between researchers and users through a neutral third party (presumably the people who conduct the utilization efforts)

Methodologies and Focus of Measuring Knowledge Use

Dunn (1983) conducted an 18-month project involving a review of existing literature to develop an inventory of concepts, procedures, and measures available for conducting empirical research on knowledge use. Dunn found that there were widely varied linguistic usages that made it difficult or impossible to compare, contrast, and evaluate essential variations in the concepts, methods, and measures in this area. However, three basic dimensions were found to underlie different concepts of knowledge use: (1) composition, a dimension that distinguishes between individual (for decision making) and collective (for enlightenment) uses of knowledge; (2) expected effects, a dimension that contrasts conceptual and instrumental use of knowledge; and (3) scope, a dimension that contrasts processes of use in terms of their generality (general use of knowledge) and specificity (e.g., use of specific recommendations of a program evaluation). As indicated by Dunn, the scope of use may also be viewed as a continuum ranging from general processes of being familiar with or aware of something to specific processes of being able to explain or perform some action.

More than 60 procedures used to study knowledge utilization were identified through this review and were categorized into three main methods of inquiry: (1) naturalistic observation; (2) content analysis; and (3) questionnaires and interviews. Within the questionnaires and interviews, there were three categories of procedures: (1) relatively structured procedures; (2) semistructured procedures; and (3) relatively unstructured procedures. As reported by Dunn (1983), at least 30 different questionnaires and interviews or schedules were employed to study various aspects of knowledge use, and these were the most frequently used method among the studies included in this review. Naturalistic observation was rarely employed. Content analyses were employed with various kinds of documents, including research reports, case materials, and other records of experience.

Recently, Hakkennes and Green (2006) conducted a structured review of 228 original studies published in peer-reviewed journals to identify the outcome measures used to determine effectiveness of strategies aimed at improving development, dissemination, and implementation of clinical practice guidelines. Three types of data were collected from the included studies: (1) the measures used to assess the effectiveness of the intervention; (2) the methods used for such assessment; and (3) the reliability and validity of those outcome measures when reported.

Hakkennes and Green (2006) grouped the outcome measures into three main categories of measurement: (1) patient level, (2) health practitioner level, and (3) organizational or process level. The outcome measures at the patient level were further categorized into those that measure the actual change in the patient's health status, such as mortality, quality of life, and actual symptom change, and those that use surrogate measures of the patient's change in health status, such as patient satisfaction, length of hospital stay, or number of health-care visits and hospitalizations. The outcome measures at the health practitioner level followed the same pattern of either measuring actual change in the practitioner's health practice, such as compliance (or noncompliance) with the implemented guidelines, or using surrogate measures, such as the practitioner's knowledge or attitudes. At the organizational or process level, the focus was on measuring change in the health system, such as changes in cost, policies and procedures, and the time spent by the practitioner. As indicated by the authors, only a small number (20%) of the studies reported the reliability or validity of the outcome measures.
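
For readers who plan to code outcome measures in their own review or evaluation, the grouping described above can be sketched as a small data structure. The following Python sketch is illustrative only and is not taken from Hakkennes and Green (2006): the names `Level`, `Kind`, `OutcomeMeasure`, `EXAMPLE_MEASURES`, and `tally_by_category` are hypothetical, and the entries are limited to the examples named in the text above. It assumes that organizational or process measures are not split into actual and surrogate types, because the text describes no such split.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Level(Enum):
    """The three levels of measurement described in the text."""
    PATIENT = "patient"
    PRACTITIONER = "health practitioner"
    ORGANIZATION = "organizational or process"


class Kind(Enum):
    """Whether a measure captures actual change or stands in for it."""
    ACTUAL = "actual change"
    SURROGATE = "surrogate measure"


@dataclass(frozen=True)
class OutcomeMeasure:
    name: str
    level: Level
    # Assumption: None marks levels for which the text gives no
    # actual/surrogate split (the organizational or process level).
    kind: Optional[Kind]


# Only the examples named in the text; not an exhaustive list.
EXAMPLE_MEASURES = [
    OutcomeMeasure("mortality", Level.PATIENT, Kind.ACTUAL),
    OutcomeMeasure("quality of life", Level.PATIENT, Kind.ACTUAL),
    OutcomeMeasure("symptom change", Level.PATIENT, Kind.ACTUAL),
    OutcomeMeasure("patient satisfaction", Level.PATIENT, Kind.SURROGATE),
    OutcomeMeasure("length of hospital stay", Level.PATIENT, Kind.SURROGATE),
    OutcomeMeasure("health-care visits and hospitalizations",
                   Level.PATIENT, Kind.SURROGATE),
    OutcomeMeasure("guideline compliance", Level.PRACTITIONER, Kind.ACTUAL),
    OutcomeMeasure("practitioner knowledge", Level.PRACTITIONER, Kind.SURROGATE),
    OutcomeMeasure("practitioner attitudes", Level.PRACTITIONER, Kind.SURROGATE),
    OutcomeMeasure("cost", Level.ORGANIZATION, None),
    OutcomeMeasure("policies and procedures", Level.ORGANIZATION, None),
    OutcomeMeasure("practitioner time", Level.ORGANIZATION, None),
]


def tally_by_category(measures):
    """Count measures per (level, kind) pair."""
    return Counter((m.level, m.kind) for m in measures)


if __name__ == "__main__":
    for (level, kind), count in tally_by_category(EXAMPLE_MEASURES).items():
        label = kind.value if kind is not None else "not subdivided"
        print(f"{level.value} / {label}: {count}")
```

A tally of this kind shows at a glance whether an evaluation relies mainly on surrogate measures at one level, which may be worth knowing before interpreting its results.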